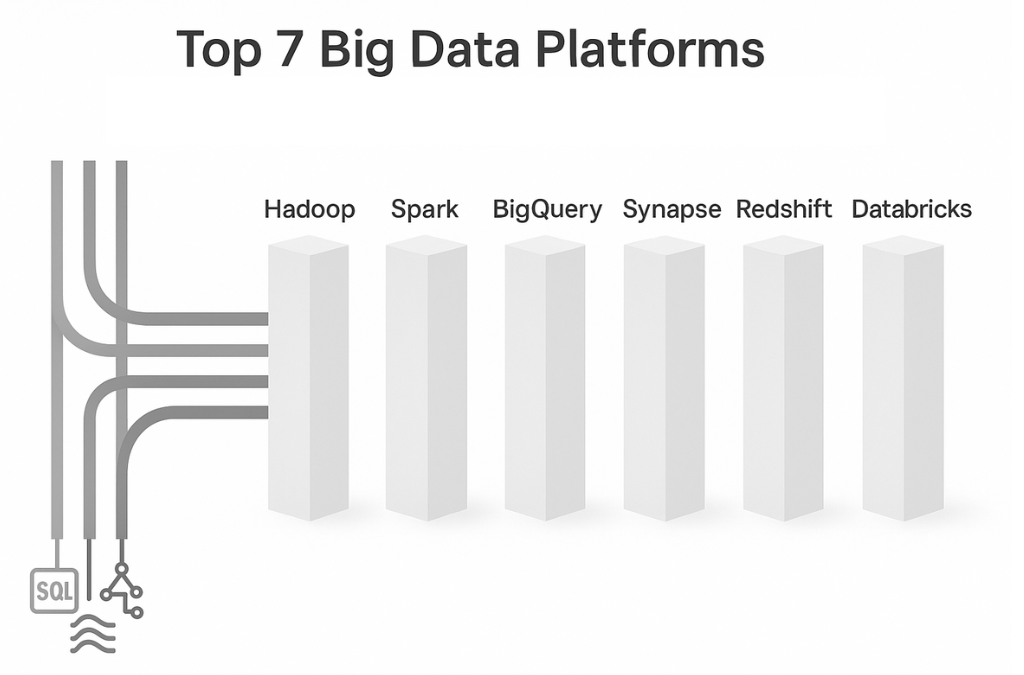

November 4, 2025

Top 7 Big Data Platforms

In a world where every team is collecting more data than last year, the platform you choose shapes speed, cost, and the insights you can deliver. This guide walks you through what big data platforms are, how to choose one that fits your needs, and a clear, actionable view of seven leading options. The goal is simple: help you move from research to a short list.

What Are Big Data Platforms?

Big data platforms are toolsets that ingest, store, process, and analyze large or fast-moving datasets. They combine storage, compute, and analytics so teams can turn raw logs, events, and records into reports, models, and applications.

Typical capabilities include:

Storage: data lake or warehouse, columnar or object storage.

Processing: batch and streaming, SQL and code-based.

Analytics: BI, ad hoc SQL, machine learning.

Scale and reliability: handle growth without performance loss.

Top Platforms, Actionable Reviews

Each review below follows the same structure, so you can scan quickly and compare like for like.

1) Apache Hadoop

Hadoop is an open-source ecosystem for storing and processing very large datasets across clusters of commodity machines. It was designed for scale and durability, so you can keep petabytes of raw data in HDFS and run heavy batch jobs on top of it without needing expensive proprietary hardware. Most teams use it to build on-premises or hybrid data lakes for compliance, long-term retention, or cost control, especially in industries like finance and telecom where data cannot freely leave the environment.

Best for: large-scale on-premises or hybrid data lakes, compliance-heavy environments, long-running batch.

Core components: HDFS for storage, YARN for resource management, MapReduce for batch processing, Hive for batch SQL, plus ecosystem tools like HBase and Oozie.

Strengths: mature ecosystem, fine-grained control, hardware-level cost leverage at scale.

Tradeoffs: higher ops burden, slower iteration for ad hoc analytics compared with cloud warehouses, complex upgrades.

Skill stack: Linux administration, Hadoop ops, SQL, Java or Scala for jobs.
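
To make the MapReduce model behind Hadoop batch jobs concrete, here is a minimal single-process sketch in Python of its three phases (map, shuffle, reduce) applied to a word count. It does not use Hadoop itself. The function names `map_phase`, `shuffle_phase`, and `reduce_phase` are illustrative, and a real job would run these phases in parallel across data stored in HDFS.

```python
from collections import defaultdict
from collections.abc import Iterable, Iterator


def map_phase(lines: Iterable[str]) -> Iterator[tuple[str, int]]:
    """Emit a (word, 1) pair for every word, as a mapper would per input split."""
    for line in lines:
        for word in line.lower().split():
            yield word, 1


def shuffle_phase(pairs: Iterable[tuple[str, int]]) -> dict[str, list[int]]:
    """Group values by key; on a cluster this is the shuffle between nodes."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return dict(grouped)


def reduce_phase(grouped: dict[str, list[int]]) -> dict[str, int]:
    """Sum the grouped counts, as a reducer would per key."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(lines: Iterable[str]) -> dict[str, int]:
    """Chain the three phases into one job."""
    return reduce_phase(shuffle_phase(map_phase(lines)))


if __name__ == "__main__":
    # Hypothetical log lines, used only for illustration.
    logs = ["error disk full", "warn disk slow", "error network down"]
    print(word_count(logs))
```

The point of the split is that mappers and reducers never share state, which is what lets a framework spread them over many commodity machines.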

2) Apache Spark

Spark is a distributed compute engine that handles ETL, streaming, analytics, and machine learning in one runtime. It can keep intermediate data in memory during execution, which makes it far faster than traditional MapReduce for iterative workloads like feature engineering or real-time enrichment. Teams use Spark when they want one engine to power both pipelines and ML training, instead of stitching together separate tools.

Best for: fast batch, streaming, and ML that benefits from in-memory compute.

Core components: Spark SQL, Structured Streaming, MLlib, running on clusters managed by YARN, Kubernetes, or managed services.

Strengths: unified engine for ETL, streaming, and ML, strong API support for Python, Scala, Java, SQL.

Tradeoffs: cluster tuning required for peak performance, costs can spike if jobs are not optimized.

Skill stack: Python or Scala, SQL, distributed computing concepts.
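
Why in-memory execution matters for iterative workloads can be shown with a toy example. The sketch below is plain Python, not Spark code: `CountingSource` is a hypothetical stand-in for slow storage, and `cached` plays the role that caching a dataset plays in an engine like Spark. The iterative job makes ten passes over the data, and the only thing that changes is how often the source has to be read.

```python
class CountingSource:
    """Stand-in for slow storage; counts how many times the data is read."""

    def __init__(self, rows: list[float]) -> None:
        self._rows = rows
        self.reads = 0

    def load(self) -> list[float]:
        self.reads += 1
        return list(self._rows)


def cached(load):
    """Wrap a loader so the data is read once and then served from memory."""
    memo = []

    def wrapper() -> list[float]:
        if not memo:
            memo.append(load())
        return memo[0]

    return wrapper


def iterate_mean(load, passes: int) -> float:
    """Toy iterative job: move an estimate toward the mean, one full pass per step."""
    estimate = 0.0
    for _ in range(passes):
        rows = load()
        gradient = sum(estimate - x for x in rows) / len(rows)
        estimate -= 0.5 * gradient
    return estimate


if __name__ == "__main__":
    data = [1.0, 2.0, 3.0, 4.0]

    uncached_source = CountingSource(data)
    iterate_mean(uncached_source.load, passes=10)

    cached_source = CountingSource(data)
    iterate_mean(cached(cached_source.load), passes=10)

    # Ten reads without caching, one read with it.
    print(uncached_source.reads, cached_source.reads)
```

On real clusters the saved reads are disk and network I/O, which is where most of the speedup for iterative jobs comes from.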

3) Google BigQuery

BigQuery is a fully managed, serverless cloud data warehouse from Google. You load data, write SQL, and Google handles scaling, performance, and infrastructure behind the scenes. Storage and compute are separated, so you can keep large historical data sets parked cheaply, and then pay only for the queries and workloads you actually run. Teams pick BigQuery when they want fast analytics with minimal ops, especially if they are already in Google Cloud.

Best for: serverless SQL analytics at petabyte scale, low ops, quick time to insight.

Core components: BigQuery storage and compute, integration with BigLake, Dataflow for pipelines, Looker for BI.

Strengths: separation of storage and compute, near-zero maintenance, strong ecosystem integrations.

Tradeoffs: egress and cross-cloud moves add cost, concurrency and slot management need planning for heavy workloads.

Skill stack: SQL first, optional Python for pipelines and ML.
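
Because storage and compute are billed separately, a rough monthly estimate is just two independent products. The sketch below shows that arithmetic in Python. The `Rates` values are placeholders chosen for illustration, not Google's price list, and the model assumes per-query billing by data scanned and ignores reserved capacity, free tiers, and egress.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Hypothetical unit prices; replace with figures from your own price list."""

    storage_usd_per_tib_month: float
    query_usd_per_tib_scanned: float


def monthly_cost(stored_tib: float, scanned_tib: float, rates: Rates) -> dict[str, float]:
    """Estimate one month of spend from data stored and data scanned by queries."""
    storage = stored_tib * rates.storage_usd_per_tib_month
    queries = scanned_tib * rates.query_usd_per_tib_scanned
    return {"storage": storage, "queries": queries, "total": storage + queries}


if __name__ == "__main__":
    # Placeholder rates, for illustration only.
    rates = Rates(storage_usd_per_tib_month=20.0, query_usd_per_tib_scanned=6.0)

    # Same 100 TiB of history, light versus heavy query months.
    print(monthly_cost(stored_tib=100, scanned_tib=5, rates=rates))
    print(monthly_cost(stored_tib=100, scanned_tib=50, rates=rates))
```

Keeping the two terms apart makes it easy to see which lever matters: pruning scanned data (partitioning, selecting fewer columns) only moves the query term, while retention policy only moves the storage term.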

4) Microsoft Azure Synapse Analytics

Azure Synapse is a unified analytics platform on Azure that brings data-warehouse-style SQL engines and Apache Spark into a single workspace. You can ingest data, transform it, query it, secure it, and visualize it with Power BI using shared identity, shared governance, and shared storage. It is attractive for teams that already live in the Microsoft world, for example those that have already adopted SQL Server, Azure Active Directory (now Microsoft Entra ID), and Power BI in the business.

Best for: Microsoft-centric stacks that want a unified experience with Power BI and Azure ML.

Core components: dedicated SQL pools, serverless SQL, Apache Spark pools, tight integration with Azure Data Lake Storage and Power BI.

Strengths: single workspace across SQL, Spark, and orchestration, strong identity and security alignment with Azure AD.

Tradeoffs: service choice matters, since dedicated and serverless pools need careful sizing; mixed workloads require governance to avoid noisy neighbors.

Skill stack: SQL and Power BI, Spark skills for advanced pipelines, Azure administration.

5) AWS Big Data Tools: Redshift and EMR

On AWS, analytics is usually built from a few core services working together. Redshift is the managed cloud data warehouse, built for high-performance SQL analytics. EMR is the managed big data platform that runs open-source engines like Hadoop and Spark on demand. S3 is the durable, low-cost storage layer at the center. This lets you design a lake plus warehouse model inside AWS without leaving the ecosystem.

Best for: AWS-native environments that need both a warehouse and flexible compute for ETL and ML.

Core components: Amazon Redshift for warehousing, Amazon EMR for managed Hadoop and Spark, S3 for storage, Glue for metadata and ETL.

Strengths: deep integration with the AWS stack, granular security and networking controls, mature tooling.

Tradeoffs: configuration choices impact cost and performance, cross-service data movement needs design to control spend.

Skill stack: SQL for Redshift, Spark or Hive for EMR, AWS networking and IAM.

6) Snowflake

Snowflake is a cloud-native data platform built to simplify warehousing, analytics, and data sharing across teams and even across companies. Storage is centralized, and compute is split into virtual warehouses that you can scale up, scale down, pause, or isolate per workload. This makes it easy for different teams, for example finance and marketing, to query the same governed data without degrading each other’s performance.

Best for: multi-cloud, low-ops SQL analytics, easy workload isolation with virtual warehouses.

Core components: centralized storage, independent compute clusters, features like Time Travel, tasks and streams, marketplace for data sharing.

Strengths: near-zero maintenance, simple scaling, strong data sharing and governance features across clouds.

Tradeoffs: vendor-specific features increase lock-in; costs rise with always-on warehouses; native ML now covers feature management, model training, model registry, and model serving, but very large or specialized training may still require external compute.

Skill stack: SQL first, optional Python for tasks, connectors for ELT tools.
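
The cost gap between an always-on warehouse and one that pauses when idle can be estimated from a day's query pattern. The sketch below is a simplified model in Python, not Snowflake's billing logic: `billed_minutes` is a hypothetical helper that assumes the warehouse resumes when a query arrives and suspends after `idle_timeout` idle minutes, and it ignores minimum billing increments and warehouse size.

```python
def billed_minutes(queries: list[tuple[int, int]], idle_timeout: int) -> int:
    """Minutes a warehouse runs when it resumes on demand and auto-suspends.

    `queries` holds (start_minute, duration_minutes) pairs within one day.
    """
    total = 0
    current_start = None
    current_end = None
    for start, duration in sorted(queries):
        # The warehouse stays up through the query plus the idle window.
        end = start + duration + idle_timeout
        if current_end is None or start > current_end:
            # Warehouse was suspended: close the previous running period.
            if current_end is not None:
                total += current_end - current_start
            current_start, current_end = start, end
        else:
            # Warehouse was still running: extend the current period.
            current_end = max(current_end, end)
    if current_end is not None:
        total += current_end - current_start
    return total


if __name__ == "__main__":
    # Hypothetical day: two morning queries close together, one later report.
    day = [(0, 10), (12, 5), (600, 30)]
    paused = billed_minutes(day, idle_timeout=5)
    always_on = 24 * 60
    print(f"auto-suspend: {paused} min, always-on: {always_on} min")
```

For bursty workloads like this one, the running time with auto-suspend is a small fraction of the day, which is why the idle timeout is usually the first setting to review when costs climb.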

7) Databricks

Databricks is a lakehouse platform built on Apache Spark and Delta Lake. It tries to remove the old split between data lake and data warehouse by letting you store data once in open formats, then run analytics, real-time streaming, and machine learning all in one governed environment. Engineering and data science teams like it because notebooks, jobs, lineage, models, and governance live in one place instead of being stitched together.

Best for: lakehouse architecture that blends SQL analytics, streaming, and ML on one platform.

Core components: Unity Catalog for governance, Delta Lake for storage format, SQL warehouses, collaborative notebooks, MLflow for MLOps.

Strengths: strong performance for both BI and ML, governed lakehouse pattern, rich developer experience.

Tradeoffs: more features to learn, cost control needs workspace governance, SQL-only teams face a learning curve.

Skill stack: SQL, Python or Scala, data engineering and MLOps practices.

Honorable Mention: Lestar

Lestar is an AI-driven data platform from Mandrill Tech that centralizes business data into a single repository, then layers dashboards, anomaly detection, and a generative AI chatbot for conversational retrieval. It offers focused modules for ESG reporting and executive finance monitoring, helping teams consolidate inputs, predict trends, and surface issues in near real time.

Best for: organizations that want to move out of spreadsheets and siloed tools and into a centralized system for ESG disclosure, audit-ready tracking, and finance visibility with AI-assisted querying.

Core components: centralized data repository and dashboards, ESG module with audit trail and trend prediction, CEO 360 module with real-time insights and 24/7 monitoring, anomaly detection and alerting, generative AI chatbot for data retrieval.

Strengths: single source of truth for ESG and finance, fast visualization and reporting, conversational access to data that reduces BI queue time, governance aids like audit trails.

Tradeoffs: narrower scope than general-purpose lakehouse or warehouse platforms, with an emphasis on ESG and finance use cases.

Conclusion

There is no single best platform, only a best fit for your workloads, skills, and budget. Use the profiles above to align latency targets, data gravity, governance needs, cloud preference, and team experience with the right tools. If you want low-ops SQL analytics, look at Snowflake or BigQuery. If you are Microsoft-centric, consider Synapse. If you are deep in AWS, pair Redshift with EMR and S3. If you need one place for BI, streaming, and ML, Databricks or Spark fits well. If you must stay on-premises or in a strict hybrid setup, Hadoop still delivers. For ESG and executive finance scenarios, Lestar adds focused modules and conversational access on top of your data.
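
The rules of thumb above can be written down as a small lookup that turns a set of requirements into a short list. This is only a sketch of the guidance in this article: the requirement labels such as `low_ops_sql` are made up for illustration, and a real evaluation would also weigh cost, latency targets, and team skills.

```python
# Each rule pairs a hypothetical requirement label with the platforms
# this guide suggests for it, in the order the conclusion lists them.
RULES: list[tuple[str, list[str]]] = [
    ("low_ops_sql", ["Snowflake", "BigQuery"]),
    ("microsoft_centric", ["Azure Synapse"]),
    ("aws_native", ["Redshift + EMR + S3"]),
    ("unified_bi_streaming_ml", ["Databricks", "Spark"]),
    ("on_prem_or_hybrid", ["Hadoop"]),
    ("esg_or_exec_finance", ["Lestar"]),
]


def shortlist(needs: set[str]) -> list[str]:
    """Return the platforms matching any stated need, without duplicates."""
    result: list[str] = []
    for need, platforms in RULES:
        if need in needs:
            for platform in platforms:
                if platform not in result:
                    result.append(platform)
    return result


if __name__ == "__main__":
    print(shortlist({"low_ops_sql", "aws_native"}))
```

A team that states more than one need gets the union of the matching platforms, which is a reasonable starting point for a proof of concept rather than a final decision.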