Best Practices for Effective Data Quality Management

Strong decisions start with strong data. Data quality management is how an organization keeps data accurate, consistent, and useful from the moment it is created to the moment it is retired. This guide explains what it is, why it matters, how it works in practice, and how to keep improving over time.

What Is Data Quality Management

Data quality management is the coordinated set of policies, roles, processes, and tools that keep data accurate, reliable, and consistent. It includes preventive work, such as setting standards and validating data at entry, and corrective work, such as cleansing and deduplicating records. Typical activities include profiling to understand current quality, validation to block errors, cleansing to remove them, and defining clear standards and KPIs so everyone knows what “good” looks like. The goal is simple: make data trustworthy so teams can use it with confidence.

Why Data Quality Management Matters

Good data quality reduces waste, improves decisions, and lowers risk. Poor quality does the opposite: it creates rework, bad forecasts, compliance issues, and unhappy customers. In a competitive market, clean data becomes an advantage because it speeds up analysis, stabilizes automation, and builds trust with stakeholders. It also strengthens compliance, improves efficiency by cutting time spent on fixes, and supports better customer experiences through consistent and personalized interactions.

Key Dimensions of Data Quality

Each dimension highlights a different way data can go right or wrong. Focus on accuracy (the data matches reality), completeness (required fields are present), consistency (values agree across systems), timeliness (data is available when needed), validity (values follow rules and formats), uniqueness (no unintended duplicates), and relevance (the data serves a clear business need). Use these dimensions to set targets, measure progress, and guide remediation.

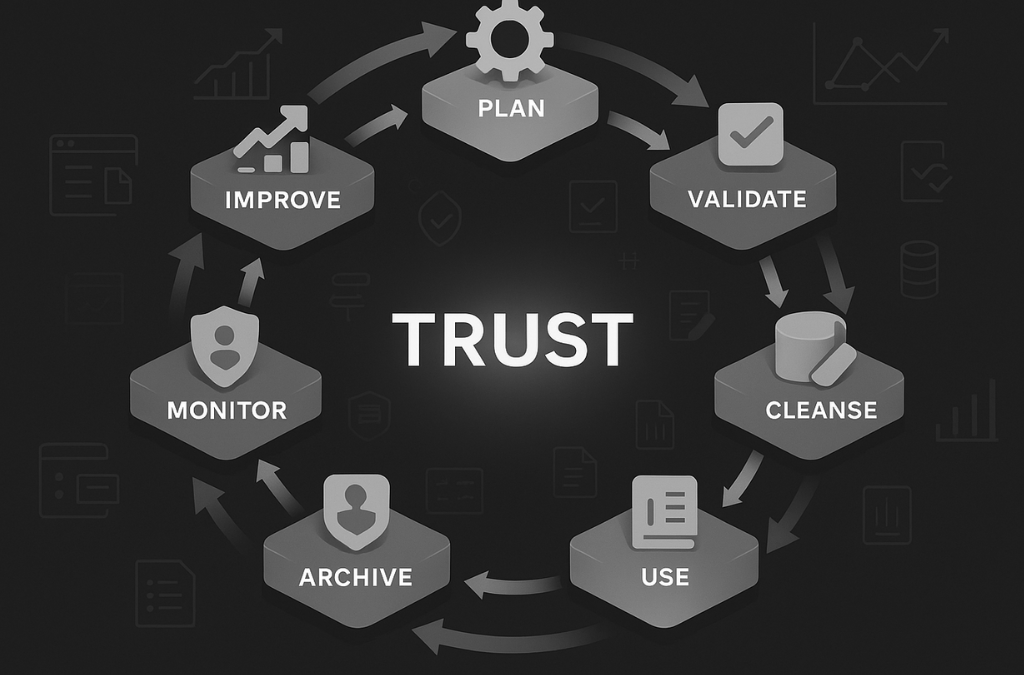
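Several of these dimensions can be turned into simple ratios. The sketch below is one possible way to score completeness, uniqueness, and validity on a small in-memory dataset. The sample records, field names, and email pattern are assumptions made for illustration, not part of any specific tool.

```python
import re

# Hypothetical sample records; field names are illustrative only.
records = [
    {"id": 1, "email": "ana@example.com", "country": "US"},
    {"id": 2, "email": "", "country": "US"},
    {"id": 3, "email": "not-an-email", "country": "DE"},
    {"id": 3, "email": "li@example.com", "country": None},
]

# Deliberately loose email format check (an assumption, not a full validator).
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def completeness(rows, field):
    """Share of rows where the field is present and non-empty."""
    filled = sum(1 for r in rows if r.get(field) not in (None, ""))
    return filled / len(rows)


def uniqueness(rows, field):
    """Ratio of distinct values to total rows for the field."""
    values = [r.get(field) for r in rows]
    return len(set(values)) / len(values)


def validity(rows, field, pattern):
    """Share of non-empty values that match the expected format."""
    values = [r[field] for r in rows if r.get(field) not in (None, "")]
    if not values:
        return 1.0
    return sum(1 for v in values if pattern.match(v)) / len(values)


scores = {
    "email_completeness": completeness(records, "email"),
    "id_uniqueness": uniqueness(records, "id"),
    "email_validity": validity(records, "email", EMAIL_PATTERN),
}
print(scores)
```

Scores like these only become useful once they are compared against an agreed target, so each ratio would normally be paired with a threshold and an owner.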

The Data Quality Management Lifecycle

Quality is not a one-time fix; it is a loop. Start by planning and defining standards and ownership. Collect and create data through forms and integrations that prevent errors, then validate at entry with format checks and business rules. Store and model data so it is easy to govern and audit. Cleanse and enrich it to remove inconsistencies and fill gaps with approved values. Use and monitor it with KPIs and incident logs, and archive or delete it safely when it is no longer needed. Finally, review what you learned and improve the standards. Each pass through the loop raises the baseline.
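To make the "validate at entry" step concrete, here is a minimal sketch that combines format checks with business rules for a single record. The order fields, the SKU pattern, and the rules themselves are hypothetical examples, chosen only to show the shape of such a check.

```python
import re
from datetime import date

# Hypothetical SKU format for an order form; the pattern is an assumption.
SKU_PATTERN = re.compile(r"^[A-Z]{3}-\d{4}$")


def validate_order(order, today):
    """Return a list of human-readable errors; an empty list means accept."""
    errors = []

    # Format check: the SKU must match the agreed pattern.
    if not SKU_PATTERN.match(str(order.get("sku") or "")):
        errors.append("sku: expected format AAA-0000")

    # Format check: quantity must be a positive integer (bool is excluded).
    qty = order.get("quantity")
    if not isinstance(qty, int) or isinstance(qty, bool) or qty <= 0:
        errors.append("quantity: must be a positive integer")

    # Business rules: dates must be present and in a sensible order.
    placed, ship = order.get("placed_on"), order.get("ship_on")
    if isinstance(placed, date) and isinstance(ship, date):
        if ship < placed:
            errors.append("ship_on: cannot be earlier than placed_on")
        if placed > today:
            errors.append("placed_on: cannot be in the future")
    else:
        errors.append("placed_on/ship_on: both dates are required")

    return errors


good = {"sku": "ABC-1234", "quantity": 2,
        "placed_on": date(2025, 10, 1), "ship_on": date(2025, 10, 3)}
bad = {"sku": "abc-12", "quantity": 0,
       "placed_on": date(2025, 10, 5), "ship_on": date(2025, 10, 3)}

good_errors = validate_order(good, today=date(2025, 10, 31))
bad_errors = validate_order(bad, today=date(2025, 10, 31))
print(good_errors)
print(bad_errors)
```

Returning every error at once, rather than stopping at the first, lets a form or an upstream service fix a record in a single round trip.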

Building a Data Quality Management Framework

A framework turns intent into everyday practice. Link objectives to business outcomes so quality work has a clear purpose. Define standards and policies that cover rules, retention, and thresholds. Establish governance with clear roles and decision rights, and document the operational processes for validation, cleansing, and incident handling. Maintain metadata and a catalog so definitions and lineage are visible, then monitor with KPIs and dashboards that show accuracy, completeness, and freshness at a glance.
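One way to connect documented thresholds to monitoring is to keep the targets as data and compare measured KPIs against them. The following is a sketch under assumed names: the `Threshold` class, the target values, and the owner labels are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """A documented quality target for one KPI (values are illustrative)."""
    kpi: str
    minimum: float  # lowest acceptable score, between 0.0 and 1.0
    owner: str      # who is notified when the target is missed


# Hypothetical policy; in practice this could live in version control.
POLICY = [
    Threshold("accuracy", 0.98, "finance-data-owner"),
    Threshold("completeness", 0.95, "crm-steward"),
    Threshold("freshness", 0.90, "platform-custodian"),
]


def evaluate(measured, policy):
    """Compare measured KPI scores with the policy and list any breaches."""
    breaches = []
    for t in policy:
        score = measured.get(t.kpi)
        # A KPI that was never measured is treated as a breach, not a pass.
        if score is None or score < t.minimum:
            breaches.append((t.kpi, score, t.minimum, t.owner))
    return breaches


measured = {"accuracy": 0.991, "completeness": 0.93}
breaches = evaluate(measured, POLICY)
for kpi, score, minimum, owner in breaches:
    print(f"{kpi}: measured={score} target>={minimum} notify={owner}")
```

Treating an unmeasured KPI as a breach is a design choice: it keeps a silent gap in monitoring from looking like a healthy dashboard.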

Roles and Responsibilities

People make the framework work. Data owners are accountable for specific datasets and make access decisions. Data stewards maintain quality, enforce standards, and report on KPIs. Data custodians operate the platforms and pipelines and keep them secure and reliable. Data consumers use data as designed and surface issues when they see them. Clear ownership prevents gaps and accelerates fixes.

Best Practices and Tools

Start early and keep checks close to where data enters the system. Validate at entry and at pipeline checkpoints. Maintain a single source of truth to avoid conflicting copies. Use data contracts and schema checks so breaking changes are caught before they ship. Profile data regularly to understand distributions and outliers. Automate monitoring and alerts so the team acts when KPIs drift. Treat data issues like product incidents: track them, find root causes, fix the system, and record the learning. Train teams so quality becomes a shared habit, not a side project.

The tool stack should match these habits. Profiling and observability tools help you see trends and lineage. Cleansing and deduplication tools correct errors at scale. Governance platforms manage policies, roles, catalogs, and approvals. Start with the gaps that hurt you most, then expand.
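The data contract and schema check practice mentioned above can be approximated with very little code before any platform is adopted. The sketch below assumes a hypothetical contract for a "customers" feed, expressed as a plain dictionary. Dedicated tooling would offer far more, so treat this as an illustration of the idea only.

```python
# Hypothetical contract for a "customers" feed: field name -> (type, required).
CONTRACT = {
    "customer_id": (int, True),
    "email": (str, True),
    "signup_date": (str, True),
    "referrer": (str, False),
}


def check_contract(record, contract):
    """Return a list of contract violations for a single record."""
    violations = []
    for field, (expected_type, required) in contract.items():
        if field not in record or record[field] is None:
            if required:
                violations.append(f"missing required field: {field}")
            continue
        if not isinstance(record[field], expected_type):
            violations.append(
                f"{field}: expected {expected_type.__name__}, "
                f"got {type(record[field]).__name__}"
            )
    # Fields the contract does not know about signal an unannounced change.
    for field in record:
        if field not in contract:
            violations.append(f"unexpected field: {field}")
    return violations


ok = {"customer_id": 7, "email": "a@example.com", "signup_date": "2025-10-01"}
broken = {"customer_id": "7", "email": "a@example.com", "plan": "pro"}

ok_violations = check_contract(ok, CONTRACT)
broken_violations = check_contract(broken, CONTRACT)
print(ok_violations)
print(broken_violations)
```

Run as part of a pipeline checkpoint or a pre-release test, a check like this surfaces a renamed or retyped field before downstream consumers see it.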

Common Challenges and How to Fix Them

Late or stale data leads to outdated reports. Define freshness targets and monitor pipeline health to ensure timeliness.

Inconsistent data entry creates accuracy problems. Use validation rules and controlled vocabularies to ensure uniform input.

Duplicate records cause confusion and errors. Apply match rules and schedule regular deduplication.

Poor integration fragments meaning across systems. Align on shared definitions, test data mappings, and use data contracts between services.

Shadow databases and spreadsheets spread conflicting versions of truth. Retire them by giving teams easy access to trusted central sources.

Missing metadata makes data hard to interpret and trust. Keep an updated catalog and require definitions for key fields.
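As an illustration of the match rules suggested for duplicate records, the sketch below normalizes a key per record and keeps the most recently updated survivor. The rule itself (match on email, fall back to name) and the field names are assumptions, and real matching often needs fuzzier logic than this.

```python
def match_key(record):
    """Normalize the fields used by the match rule (an illustrative rule)."""
    email = (record.get("email") or "").strip().lower()
    name = " ".join((record.get("name") or "").lower().split())
    # Rule: same email means same customer; fall back to name when no email.
    return ("email", email) if email else ("name", name)


def deduplicate(records):
    """Keep one survivor per match key, preferring the latest update."""
    survivors = {}
    for rec in records:
        key = match_key(rec)
        current = survivors.get(key)
        # ISO 8601 date strings compare correctly as plain strings.
        if current is None or rec["updated"] > current["updated"]:
            survivors[key] = rec
    return list(survivors.values())


customers = [
    {"name": "Ana Silva", "email": "Ana@Example.com ", "updated": "2025-01-10"},
    {"name": "ana  silva", "email": "ana@example.com", "updated": "2025-06-02"},
    {"name": "Li Wei", "email": "", "updated": "2025-03-15"},
    {"name": "li wei", "email": None, "updated": "2025-02-01"},
]

unique = deduplicate(customers)
for row in unique:
    print(row)
```

Matching on name alone is risky for large datasets, so a fallback rule like this one would usually be reviewed by a data steward before it is allowed to merge records automatically.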

Continuous Improvement and Monitoring

Set a cadence for reviews. Audit key KPIs and sample records, collect feedback from users, and run post-incident reviews so fixes improve the system, not just the symptom. Update standards when products, regulations, or sources change, and keep your checks and monitors current as new pipelines arrive. Improvement is the point of the loop, not an afterthought.

The Future of Data Quality Management

Several trends are reshaping the work. AI and machine learning can detect anomalies and predict issues before they hit reports. Real-time quality checks validate streaming data as it lands. Data observability brings monitoring, lineage, and incident response into one view. Stronger traceability makes trust easier to prove to auditors and customers. Adopt what helps your use cases, then measure the lift.
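Anomaly detection does not have to start with a trained model. The sketch below uses a plain z-score over recent history as a simple statistical stand-in for the machine-learning detectors described above. The row counts and the threshold of 3.0 are assumed values for illustration.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, z_threshold=3.0):
    """Flag the latest value if it is far from the recent history.

    Uses a z-score, a basic statistical check, not a learned model.
    """
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold


# Hypothetical daily row counts for one table.
daily_rows = [10_120, 9_980, 10_050, 10_210, 9_940, 10_075, 10_130]

normal_day = is_anomalous(daily_rows, 10_090)
broken_load = is_anomalous(daily_rows, 4_300)
print(normal_day, broken_load)
```

A check like this gives a baseline to measure against, which makes it easier to tell whether a more sophisticated detector actually delivers a lift.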

Conclusion

Data quality management protects the value of your data and the decisions built on it. Define standards, assign ownership, validate early, monitor continuously, and improve the system each cycle. The result is simple: faster work, lower risk, and better outcomes for your customers and your business.